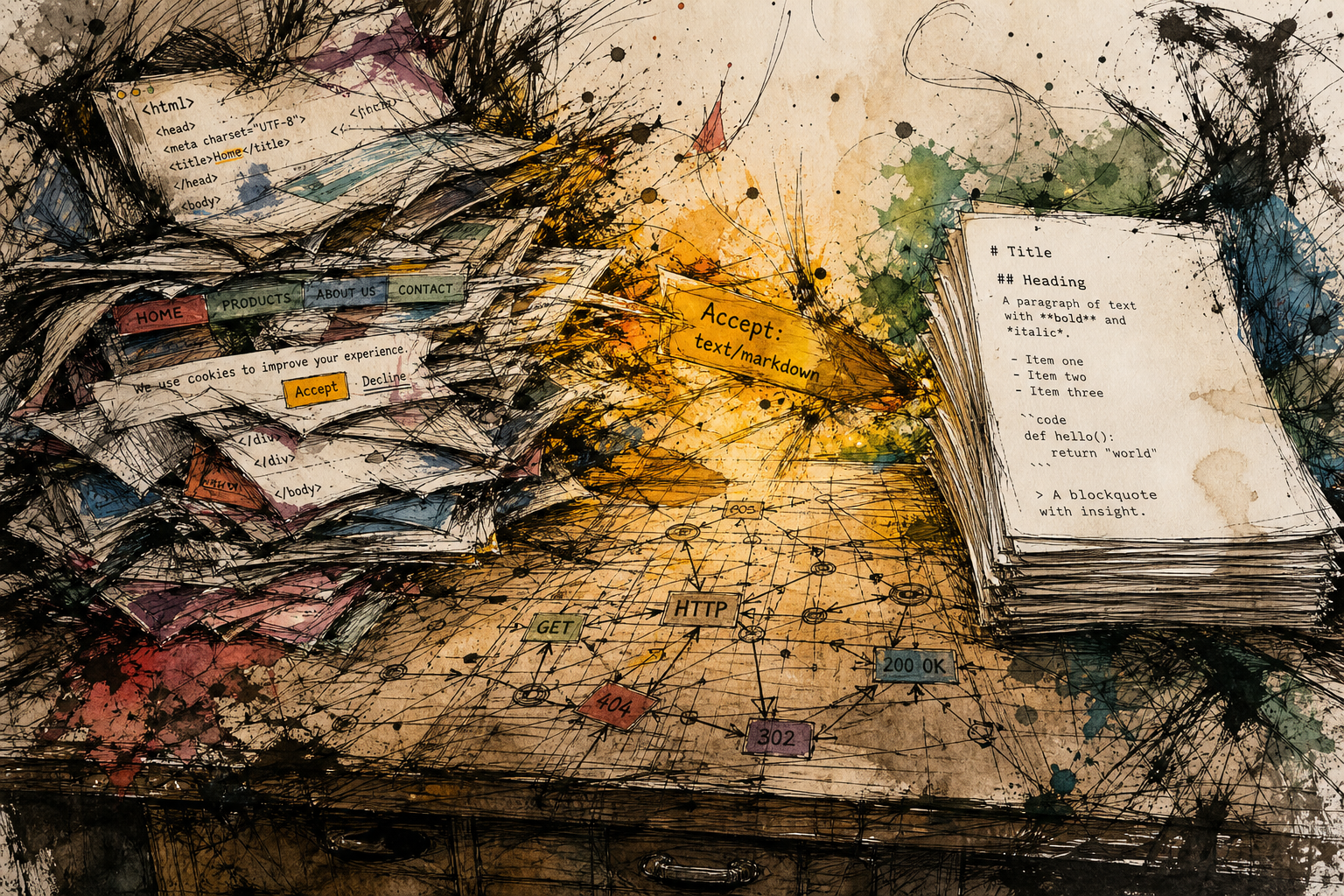

/llms.txt and per-page markdown via Accept: text/markdown. See Well, That Escalated Quickly: Making Your Hugo Site LLM-Friendly in Under an Hour for the how-to.

Every time an AI agent reads a page on your website, it burns four out of every five tokens on scaffolding it cannot use. The <div> wrappers, the navigation menus, the cookie consent banners, the tracking scripts, the CSS class names, all of it takes up space in a context window that could have held your actual content. And context windows are finite. Models that claim 200,000-token capacity become unreliable around 130,000 per AIMultiple’s January 2026 analysis. When you send HTML, you are burning half your usable context on markup the agent cannot even use.

Markdown fixes this. A typical blog post consumes 16,180 tokens as HTML but only 3,150 tokens as markdown. That is an 80 percent reduction, measured by Cloudflare in February 2026. For e-commerce product pages, the gap hits 95 percent with 35 percent better RAG accuracy according to SearchCans. The format is already the lingua franca of AI training data and prompt engineering. Almost every LLM was trained on it, generates it natively, and retrieves information most accurately from it.

So I ran an experiment. I wanted to know how many websites would serve clean markdown to an agent that asked for it properly, using the HTTP content negotiation mechanism that has been part of the web’s design since 1999.

The answer was almost none.

What I Actually Did

HTTP content negotiation is not a new idea. It has been in the spec since RFC 2616 in 1999. The mechanism is almost absurdly simple: your client tells the server what format it wants via the Accept header, and the server responds in that format if it can. If it cannot, it falls back to the default.
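As a minimal sketch of what "asking properly" looks like from the client side, the snippet below builds a request that prefers markdown and falls back to HTML, then checks what the server actually sent. The function names and the q-value of 0.8 are illustrative assumptions, not the exact probe used for the tests in this post.

```python
import urllib.request


def build_markdown_request(url: str) -> urllib.request.Request:
    """Build a GET request that prefers markdown but accepts HTML as a fallback."""
    return urllib.request.Request(
        url,
        headers={"Accept": "text/markdown, text/html;q=0.8"},
    )


def served_markdown(content_type: str) -> bool:
    """Return True if a Content-Type value names text/markdown (parameters ignored)."""
    media_type = content_type.split(";", 1)[0].strip().lower()
    return media_type == "text/markdown"


def probe(url: str) -> bool:
    """Fetch url over the network and report whether the server answered with markdown."""
    with urllib.request.urlopen(build_markdown_request(url), timeout=10) as resp:
        return served_markdown(resp.headers.get("Content-Type", ""))
```

Calling `probe()` on a page URL returns `True` only when the response's Content-Type is text/markdown. A server that ignores the header simply answers with text/html, which is the fallback behavior described above.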

In 2016, RFC 7763 formally registered text/markdown as a standard internet media type. The media type has been registered for a decade. The format has existed since John Gruber defined Markdown in 2004. And serving different representations of the same resource to different clients was part of the original design: the Accept header is already documented in the HTTP/1.0 specification as an optional feature. We optimized for one client, the graphical browser, and let the rest quietly atrophy.

But almost nobody turned it on.

I tested Wikipedia, Hacker News, GitHub, The Verge, Ars Technica, the New York Times, BBC, Mozilla, Python docs, Ghost’s own blog, Hugo’s site, Jekyll’s site, and a dozen more. Every single one returned text/html. So did Substack, which is worth calling out separately because Substack hosts more long-form writing that agents would want to read than almost any other platform, and it offers zero agent-friendly infrastructure. No llms.txt, no content negotiation, nothing. The only exception I could find across 25 tests was WordPress.org, which returns text/markdown on every page: the root, the plugin directory, the developer docs, individual articles, everything. They even set Vary: Accept in the response headers so CDNs and caches handle it correctly. That is not an accident. It is a deliberate, production-quality implementation. There may be other sites doing this that I missed, and I hope there are. But the fact that you have to go looking at all tells you how far we have to go.

The Token Math That Makes This Inevitable

Here is what those numbers mean in practice.

An AI agent tasked with researching a product, reading reviews, comparing specs, and making a recommendation might need to read 10 product pages. Taking Cloudflare’s blog-post figures as a rough stand-in, those pages cost roughly 160,000 tokens as HTML, pushing past the reliable context window of most models. As markdown, the same work consumes 31,500 tokens. The tokens saved would cover about 40 more markdown sources, and even inside a 130,000-token reliable window there is room for about 30 more, instead of forcing the agent to make do or degrade its analysis.
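The arithmetic can be checked in a few lines. This sketch reuses the per-page figures quoted earlier (16,180 tokens as HTML, 3,150 as markdown) and the 130,000-token reliability threshold. Treating every page as costing the same as Cloudflare's sample blog post is a simplifying assumption.

```python
# Per-page figures quoted earlier in the post (Cloudflare's blog-post measurement).
HTML_TOKENS_PER_PAGE = 16_180
MARKDOWN_TOKENS_PER_PAGE = 3_150
RELIABLE_WINDOW = 130_000  # point where long-context models reportedly degrade
PAGES = 10

html_total = PAGES * HTML_TOKENS_PER_PAGE
md_total = PAGES * MARKDOWN_TOKENS_PER_PAGE

# Fraction of tokens saved by serving markdown instead of HTML.
reduction = 1 - MARKDOWN_TOKENS_PER_PAGE / HTML_TOKENS_PER_PAGE

# Extra markdown pages that fit in the tokens the HTML version would have used.
extra_same_budget = (html_total - md_total) // MARKDOWN_TOKENS_PER_PAGE

# Extra markdown pages that fit before hitting the reliable window.
extra_reliable = (RELIABLE_WINDOW - md_total) // MARKDOWN_TOKENS_PER_PAGE

print(f"HTML total:      {html_total:,} tokens")
print(f"Markdown total:  {md_total:,} tokens")
print(f"Reduction:       {reduction:.1%}")
print(f"Extra pages, same budget:     {extra_same_budget}")
print(f"Extra pages, reliable window: {extra_reliable}")
```

Under these assumptions the HTML run costs 161,800 tokens and already overshoots the reliable window. The markdown run leaves room for 41 more pages at the same spend, or 31 more before crossing 130,000.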

Every <div> wrapper, every tracking script, every cookie consent banner, every CSS class name is a token that the agent pays for but cannot use. And when you send HTML, you are burning half your usable context on scaffolding the agent cannot even use.

This Matters Most for Commerce

It’s easy to think of AI-friendly content as a publishing problem. Blogs, documentation, news sites. But the biggest incentive mismatch is in commerce.

If you run an e-commerce site and you want AI agents to browse your catalog, evaluate your products, and recommend them to human shoppers, you should make that as cheap as possible for the agent. Every token you waste on navigation menus and analytics scripts is a token that could have gone toward reading about your competitor’s product instead. The agent’s context window is a zero-sum game. Your HTML bloat is a tax on your own discoverability.

A product page in markdown could be beautifully simple:

    # Product Name

    Price: $29.99
    Availability: In stock
    Rating: 4.2/5 (1,247 reviews)

    A lightweight, weather-resistant backpack with padded laptop compartment
    and three external pockets.

    ## Specifications

    - Weight: 1.2 lbs
    - Material: Recycled polyester
    - Capacity: 22 liters
    - Colors: Black, Navy, Olive

That’s it. No DOM tree. No hydration. No accessibility overlays. The agent reads the page, understands the product, and moves on. No site should need a headless browser just to let an AI read a product description. Yet that is the current state of the art.

The Ecosystem Is Already Here

The nice surprise I found while researching this is that you do not need to be Cloudflare or WordPress.org to make this work. The tools exist at every layer of the stack.

Full-stack plugins have appeared on WordPress. A plugin called Serve Markdown detects the Accept header and returns clean YAML frontmatter plus markdown body. It also adds <link rel="alternate" type="text/markdown"> tags so agents can discover the markdown representation automatically. Another plugin called Markdown Negotiation for Agents adds REST API endpoints, WP-CLI commands, caching, and token estimation headers.
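On the agent side, discovering that link tag takes only the standard library. The sketch below assumes the tag carries an `href` attribute pointing at the markdown representation, which is how `<link rel="alternate">` is normally used. The class and function names are illustrative and not part of either plugin.

```python
from html.parser import HTMLParser


class AlternateMarkdownFinder(HTMLParser):
    """Collect href values from <link rel="alternate" type="text/markdown"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        # Attribute values may be None for bare attributes; normalise to strings.
        attr = {name: (value or "") for name, value in attrs}
        rels = attr.get("rel", "").lower().split()
        is_markdown = attr.get("type", "").strip().lower() == "text/markdown"
        if "alternate" in rels and is_markdown and attr.get("href"):
            self.hrefs.append(attr["href"])


def find_markdown_alternates(html: str) -> list[str]:
    """Return every advertised markdown alternate in the given HTML document."""
    finder = AlternateMarkdownFinder()
    finder.feed(html)
    return finder.hrefs
```

An agent that receives HTML anyway can run this over the response and follow the first result, instead of paying for the full page on every later visit.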

At the infrastructure level, Static Web Server (a Rust-based static file server) has a native --accept-markdown flag. When enabled, it checks for path.html.md files alongside path.html and serves the markdown variant when the client asks for it. No application code changes needed.
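The lookup rule described here is simple enough to sketch. This is not Static Web Server's code (that project is written in Rust). It is a hypothetical Python illustration of the "sibling file" idea, with the existence check passed in as a parameter so the logic can be exercised without touching the disk.

```python
import os
from typing import Callable


def markdown_sibling(html_path: str) -> str:
    """Return the path of the markdown variant that sits next to an HTML file."""
    return html_path + ".md"  # e.g. about.html -> about.html.md


def pick_variant(
    html_path: str,
    wants_markdown: bool,
    exists: Callable[[str], bool] = os.path.isfile,
) -> str:
    """Choose which file to serve for a request that resolved to html_path.

    The markdown sibling is served only when the client asked for it and the
    file is actually present; every other case falls back to the HTML file.
    """
    candidate = markdown_sibling(html_path)
    if wants_markdown and exists(candidate):
        return candidate
    return html_path
```

The fallback branch is what keeps existing behavior unchanged: browsers, and pages without a markdown sibling, get the HTML file exactly as before.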

And at the edge, Cloudflare’s Markdown for Agents does real-time HTML-to-markdown conversion for any site on their network. You toggle it on in the dashboard and it works. They also add Content-Signal headers so sites can express their preferences around AI training and search use.

The agents themselves are already adapting. Cloudflare’s blog notes that Claude Code and OpenCode already send Accept: text/markdown with their requests. These tools are actively looking for a cleaner web. They just are not finding one.

None of this is happening in isolation. There is a loosely coordinated community forming around the idea that the web needs a machine-friendly face. The WordPress plugin developers, the Cloudflare edge team, the Static Web Server maintainers, Jeremy Howard’s llms.txt proposal, the Content Signals protocol: they are all converging on the same insight from different directions. The mechanism already exists. The tools are being built. The clients are asking for it. The missing piece is publishers flipping the switch.

The Alternatives That Came Close

I should mention the other approaches, because we talked through them before landing on this one.

Gopher (1991) had the right idea about deterministic structure but died for reasons that had nothing to do with its design. Gemini (2019) revived the concept with TLS and a lightweight markup language but requires a separate protocol you have to opt into. llms.txt (2024) gave us site-level orientation files, which some documentation sites have adopted (Ghost’s own docs at docs.ghost.org maintain one), but a study of 300,000 domains found no measurable link between having an llms.txt and citation frequency.

What makes HTTP content negotiation different is that it does not ask the web to adopt a new protocol, a new URL scheme, or a new file at the root. It asks publishers to add one response handler to their existing stack. The URL stays the same. The SEO stays the same. The caching stays the same. The only thing that changes is what gets sent to clients that ask for something different.
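To make "one response handler" concrete, here is a minimal, framework-free sketch of the decision that handler has to make. It parses the Accept header's q-values, serves markdown only when the client lists text/markdown explicitly and ranks it at least as high as text/html, and always emits Vary: Accept so caches keep the two representations apart. The function names and the tie-breaking rule are assumptions for illustration, and wildcards such as `*/*` deliberately fall through to HTML.

```python
def parse_accept(header: str) -> dict[str, float]:
    """Parse an Accept header into {media_type: q}, defaulting q to 1.0."""
    prefs: dict[str, float] = {}
    for part in header.split(","):
        fields = [field.strip() for field in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0
        for param in fields[1:]:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        prefs[media_type] = q
    return prefs


def negotiate(accept_header: str) -> tuple[str, dict[str, str]]:
    """Return ("markdown" | "html", response headers) for a request."""
    prefs = parse_accept(accept_header)
    md_q = prefs.get("text/markdown", 0.0)
    html_q = prefs.get("text/html", 0.0)
    wants_markdown = md_q > 0 and md_q >= html_q
    if wants_markdown:
        content_type = "text/markdown; charset=utf-8"
    else:
        content_type = "text/html; charset=utf-8"
    # Vary tells shared caches that the body depends on the Accept header.
    headers = {"Content-Type": content_type, "Vary": "Accept"}
    return ("markdown" if wants_markdown else "html", headers)
```

A typical browser Accept header never mentions text/markdown, so this rule leaves human traffic on the HTML path.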

There is a real concern about cloaking here. Serving different content at different URLs for bots versus humans violates search engine policies, and John Mueller at Google has called separate markdown pages “a stupid idea.” But content negotiation at the same URL is different. It is the same content in a different representation, served at the same address, using a mechanism that has been standard since before Google existed. The SEO distinction matters and the content negotiation approach passes the test cleanly.

The Irony I Cannot Escape

I am writing this on my own blog at magnus919.com, which is built with Hugo and served through DigitalOcean. It does not support Accept: text/markdown. If you hit my site with that header today, you get the same HTML as everyone else.

There is another layer to this. Ghost CMS runs three of my sites (rdumesh.org, southeastme.sh, groktop.us). Ghost’s own documentation at docs.ghost.org maintains an llms.txt, but the platform does not ship it for customers. I checked.

The story gets more interesting than a simple missing feature. Someone submitted a pull request to add llms.txt to Ghost on April 14. It adds generation for both llms.txt and llms-full.txt, per-page markdown export, and an admin toggle. It has been open for almost a month with three comments and eight review comments. And someone else asked about content negotiation in issue #26968 and was told that the project does not track feature requests on GitHub and that they should post on the forum instead.

Hugo, the static site generator this very blog runs on, has had an open proposal for llms.txt generation since November 2025. Six months. No implementation. No pull request. Someone filed a duplicate in April 2026 and it was closed within the hour.

So the people who built the tool understand the value well enough to use it themselves. A contributor built the feature. It is sitting in a pull request. And the project’s process routes feature requests away from the issue tracker entirely. That kind of friction tells you the ecosystem is still early.

I am irritated enough by all of this to consider what it would take to fix it on my own sites. Hugo generates static HTML from markdown source files, and my markdown source files still exist on disk at the corresponding paths. A small middleware layer in my deployment pipeline or a configuration change in my web server could serve the markdown source when the right header comes in. It would not be hard. The question is whether enough agents will ask for it to make the effort worthwhile. Based on what I am seeing from Cloudflare, from the coding agent tooling, and from the WordPress ecosystem, I think the answer is yes. And it will only become more yes over time.

What This Would Look Like

The effort involved depends entirely on where you are in the stack.

If you are on WordPress, there are plugins that handle this in minutes. The Serve Markdown plugin and Markdown Negotiation for Agents both work out of the box with no server configuration changes. If you are on Cloudflare, you toggle a switch in the dashboard and the edge network handles HTML-to-markdown conversion for your entire site. If you are on Static Web Server, you pass --accept-markdown at startup.

If you are on a static site generator like Hugo, the path is harder. Your markdown source files exist on disk and your static HTML is generated from them, but serving those source files with the right content type requires either middleware in your deployment pipeline, a web server configuration that can do content negotiation against a separate file tree, or a platform change. I am looking at this problem on my own site right now and the honest answer is that it might not be worth doing on the current stack. It might make more sense to migrate to a platform where this comes built in.
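Part of what makes the Hugo case awkward is that a URL does not map to exactly one source path. The sketch below lists the source files a middleware layer might check for a given request path, assuming Hugo's default layout (a `content/` directory, with no custom permalinks or slug overrides). The function name is hypothetical, and the raw sources would still contain front matter and shortcodes that need handling before they are served.

```python
from pathlib import PurePosixPath


def source_candidates(url_path: str, content_root: str = "content") -> list[str]:
    """List markdown source paths that could have produced url_path.

    Assumes default Hugo conventions: /posts/foo/ may come from
    content/posts/foo.md, from a leaf bundle (foo/index.md), or from a
    section list page (foo/_index.md).
    """
    # Drop empty and dot segments so a request cannot climb out of content_root.
    parts = [p for p in url_path.split("/") if p not in ("", ".", "..")]
    root = PurePosixPath(content_root)
    if not parts:
        return [str(root / "_index.md")]  # home page
    page = root.joinpath(*parts)
    return [
        str(page.with_name(page.name + ".md")),  # single-file page
        str(page / "index.md"),                  # leaf bundle
        str(page / "_index.md"),                 # section list page
    ]
```

A handler would serve the first candidate that exists on disk and fall back to the generated HTML otherwise. Sites with custom permalink rules would need a lookup table built at deploy time instead of this path guess.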

That tension is the point. The web has 27 years of architectural debt that makes some things easy and other things disproportionately hard. WordPress.org ships markdown on every page because they built the platform and could add a feature. The rest of us are working with whatever we inherited. But the gap matters because it creates an incentive to choose platforms that treat agents as first-class citizens. If your CMS vendor offers this and another does not, that is a real factor in a migration decision.

The goal is not to shame individual site owners for not having it. The goal is to build enough momentum that platform vendors start competing on it.