AI at Work Isn’t Stealing Jobs. It’s Stealing Something Worse.

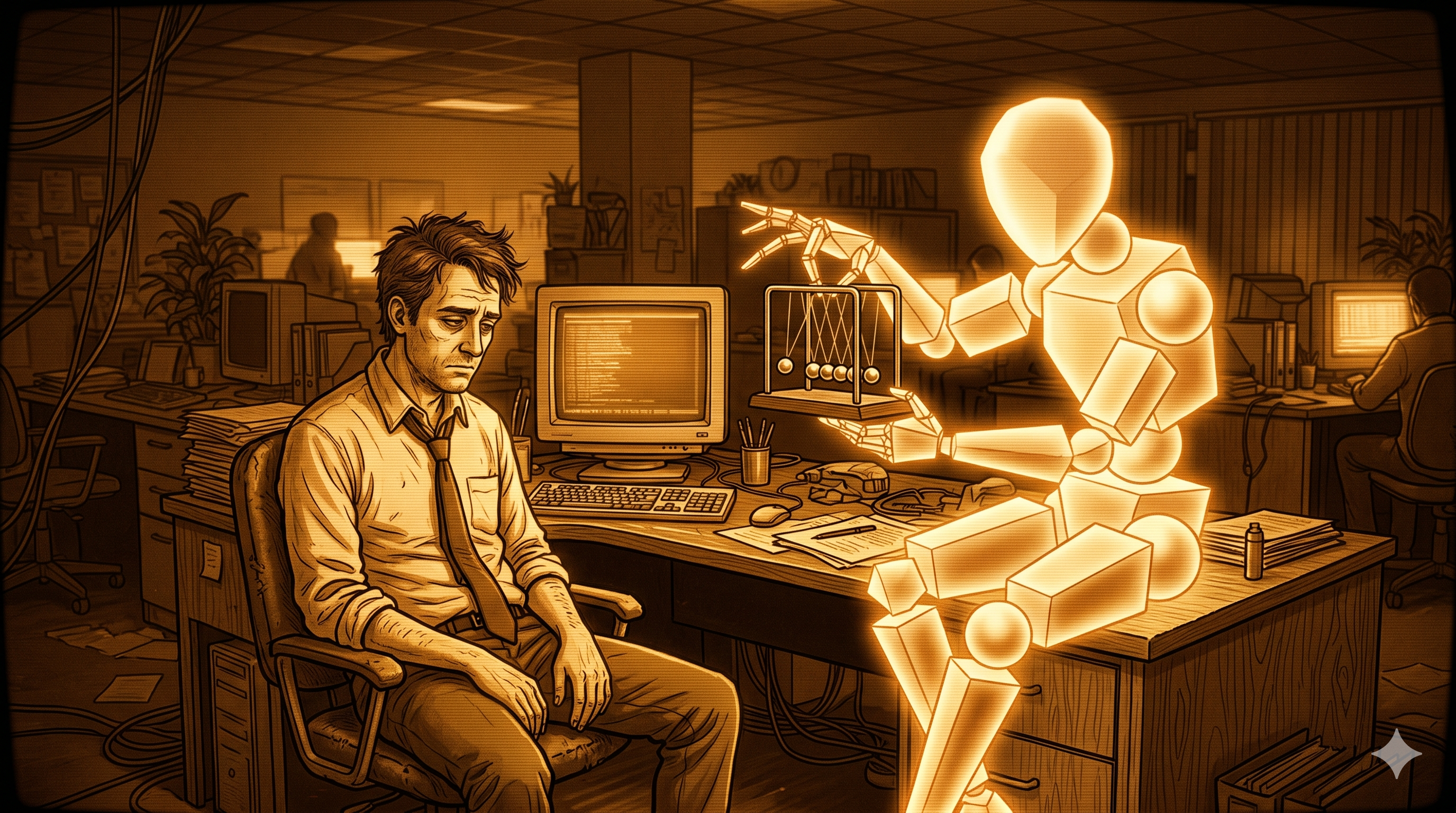

There is a version of the AI-at-work conversation that mostly takes place in op-eds and earnings calls, and it goes like this: AI will either eliminate jobs or it won’t, and the truth is probably somewhere in the middle. That conversation is fine, as far as it goes. But I think it misses the thing that people actually feel when AI gets woven into their daily work. The thing they feel isn’t fear of being replaced. It’s something quieter and harder to name.

They feel less like themselves.

That’s not a complaint you can easily file a ticket for. But it maps pretty closely to what decades of research on meaningful work has identified as the conditions under which people actually feel satisfied and engaged. And it turns out AI, deployed carelessly, has an uncanny ability to hollow out exactly those conditions, one quiet automation at a time.

What Makes Work Feel Like Yours

The clearest framework I know for understanding why work matters comes from self-determination theory, which holds that people need three things to experience genuine motivation: autonomy, competence, and relatedness. These aren’t nice-to-haves. They’re the psychological substrate of whether your work feels meaningful or like a sentence you’re serving.

Autonomy is the sense that you’re making choices, not just executing instructions. Competence is the feeling that you’re genuinely good at something and getting better. Relatedness is the web of actual human connection that forms around work: the mentor who pushes you, the colleague who gets it, the user whose problem you solved in a way that mattered to them.

When all three are present, work gives people something they can’t easily get anywhere else: a narrative of their own growth, a sense of mattering, a reason to care about tomorrow. When one or more is stripped out, work becomes a thing you endure.

AI, introduced into a workplace without much thought about what it touches, tends to hit all three.

The Autonomy Problem

The most direct attack is on autonomy. Research on algorithmic management is now fairly clear that when AI systems move into decisions about pacing, prioritization, task assignment, and quality evaluation, something shifts. Work stops feeling like something you do and starts feeling like something that happens to you. The choices migrate upward, or sideways, or into a model, and the worker becomes the hands that execute what the system decided.

This is a different experience from using a powerful tool. A good tool extends your judgment. An algorithmic manager replaces it. The difference matters enormously to the person doing the work, even when the outputs look identical from the outside.

The Competence Trap

The competence problem is subtler, and in some ways more troubling. AI assistance can feel good at first precisely because it removes friction from hard things. The hard things, it turns out, were doing a lot of work. Struggling through a difficult problem, failing at something, adjusting and trying again — this is the mechanism by which expertise actually forms. It’s uncomfortable and it’s essential.

A 2025 study on AI dependence in knowledge work found that passive reliance on AI tools — where people consume AI output rather than actively engaging with the problem — undermined self-efficacy, reduced psychological ownership of the work, and decreased the sense that the work was meaningful. What’s striking is that these effects persisted even after subjects returned to working without AI assistance. The hollowing out wasn’t temporary. The skill feedback loop, once interrupted, doesn’t just resume.

There’s a real difference between using AI as a thinking partner and using it as a substitute for thinking. The first can make you sharper. The second makes you less capable over time, and most people don’t notice the difference until they’re a fair way down the road.

When Relationships Reorganize Around Predictions

The relatedness dimension is the one that gets talked about least, probably because it’s the hardest to quantify. But it’s real. When AI systems optimize for throughput and reduce coordination overhead, they tend to route around the human interactions that were doing more than just coordinating. The impromptu conversation where someone learned something. The review cycle where a senior engineer explained not just what was wrong but why. The collaboration that was inefficient but produced people who were genuinely better at their work.

Work organized around prediction and optimization tends to reduce the opportunities for self-development and interpersonal connection that give people reasons to stay and grow. The efficiency gain is real. The cost is paid in a currency that doesn’t show up on dashboards.

The Invisible Ceiling

Alongside these three, there’s a fourth condition that matters: whether you can see why your work matters. Call it beneficence, or just the sense that what you do has a real effect on real people. Meaningful work research treats this as a distinct need, not just a byproduct of the other three. People need to feel that their work matters to someone, not just that they performed it correctly.

AI can abstract away the recipient. When you’re reviewing AI-generated outputs for quality rather than solving a customer’s actual problem, the connection between your effort and its human consequence gets fuzzy. You’re no longer doing the thing; you’re auditing whether the thing was done. For a lot of people, that distinction drains something significant out of the work.

What This Actually Looks Like in Practice

I want to be careful not to make this sound more abstract than it is. The people experiencing this aren’t usually lying awake analyzing self-determination theory. They’re just noticing things. The job feels less interesting. The problems feel less like problems worth solving. There’s a flatness to days that used to have texture.

Research on algorithmic control in physical work contexts found reduced well-being driven by increased demands under automated systems — the time pressure and cognitive load that came from working inside a system that doesn’t negotiate. Knowledge workers face a version of this too: the quiet technostress of adapting to tools that change constantly, the opacity of systems that make consequential decisions without explanation, the sense that you could escalate but there’s nothing to escalate to.

There’s also a dignity dimension here. When a system makes a decision about your work that you can’t contest or even fully understand, it erodes something that sits beneath motivation entirely: the basic experience of being treated as a person with judgment, rather than a variable in an optimization function.

Researchers studying AI and psychological needs at work describe this as a threat to the core psychological infrastructure that makes work viable. Not the surface-level experience of whether you like your job, but the deeper question of whether work is still a context in which you can develop, connect, and contribute as an agent rather than a component.

The Design Choice Nobody Is Making

Here is the thing. None of this is inevitable. The way AI gets deployed at work is a design choice, made by a surprisingly small number of people, often with very limited consideration of what the work actually means to the people doing it.

There’s a version of AI-assisted work where the model absorbs the routine and returns the interesting. Where automation handles what was already rote and humans retain authorship over what required judgment. Where the feedback loops that build competence are preserved rather than bypassed. Where the relational texture of collaboration is maintained, not optimized away.

That version exists. It just requires that the people designing these deployments care about something other than throughput.

Most of us who work in or around technical teams have seen both kinds. The difference isn’t usually the technology. It’s whether the people making implementation decisions thought seriously about what the work was doing for the people doing it, not just what the work was producing.

The job-elimination question will sort itself out over time. The hollowing-out question is happening now, at scale, mostly unexamined. And the cost isn’t showing up in unemployment figures. It’s showing up in the quiet deflation of people who used to find their work genuinely meaningful and are now struggling to remember why they cared.